400G Optical Transceivers Are Manufactured for Datacenters

Nov 10, 2025

Hyperscale datacenter operators deployed over 20 million 400G and 800G optical modules in 2024, marking an inflection point in network infrastructure evolution. This massive adoption reflects a fundamental shift: power efficiency per transmitted bit now outweighs upfront hardware costs in procurement decisions. The 400G optical transceiver has emerged as the backbone technology enabling this transformation, with manufacturing processes that integrate silicon photonics, advanced modulation schemes, and automated production flows to meet unprecedented demand. Ensuring these modules perform reliably across years of continuous operation requires rigorous validation, including thermal cycling tests that subject transceivers to hundreds of temperature extremes before deployment.

Manufacturing Economics Drive 400G Datacenter Adoption

The value proposition for 400G optical transceivers stems from three converging manufacturing realities that traditional 100G modules cannot match. First, silicon photonics fabrication enables chip-on-board packaging that reduces component count from 40 discrete elements to just 4 integrated units. This consolidation cuts assembly costs while improving thermal performance, a factor that becomes decisive when deploying thousands of modules per facility.

Manufacturing cost structures reveal the advantage. Intel's silicon photonics platform operates on 300mm wafers using standard CMOS processes at 24nm nodes, allowing optical components to piggyback on semiconductor industry infrastructure. The automated wafer-scale testing identifies defects early, pushing yield rates above 85% compared to 60-70% for traditional discrete optical assemblies. These efficiency gains translate directly to price points: 400G QSFP-DD modules now cost $400-700 for DR4 variants, delivering 4x the bandwidth of 100G modules at roughly 2x the price.

Beyond unit economics, energy consumption defines long-term operational value. Modern 400G transceivers consume 12-15W while transmitting 400Gbps, which works out to approximately 30-37.5 pJ per bit (roughly 27-33 Gbps per watt). This energy efficiency, coupled with PAM4 modulation that transmits 2 bits per symbol, enables datacenter operators to scale bandwidth without proportional increases in power infrastructure. In 2025, hyperscale data centers are prioritizing power efficiency over upfront cost when adopting 400G optical transceivers, as AI workloads and cloud services demand high throughput while minimizing energy consumption per bit.
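The efficiency figures follow directly from the power and line-rate numbers quoted above. The short sketch below reproduces the arithmetic; the function names are illustrative and not taken from any vendor tool.

```python
def gbps_per_watt(line_rate_gbps: float, power_w: float) -> float:
    """Throughput delivered per watt of module power."""
    if power_w <= 0:
        raise ValueError("power_w must be positive")
    return line_rate_gbps / power_w


def picojoules_per_bit(line_rate_gbps: float, power_w: float) -> float:
    """Energy spent per transmitted bit.

    W / (Gbit/s) gives nJ per bit, so multiply by 1000 to get pJ per bit.
    """
    if line_rate_gbps <= 0:
        raise ValueError("line_rate_gbps must be positive")
    return power_w / line_rate_gbps * 1000.0


if __name__ == "__main__":
    # 12 W and 15 W are the module power bounds quoted in the text.
    for watts in (12.0, 15.0):
        print(
            f"{watts:.0f} W: "
            f"{gbps_per_watt(400.0, watts):.1f} Gbps/W, "
            f"{picojoules_per_bit(400.0, watts):.1f} pJ/bit"
        )
```

Lower power means more Gbps per watt and fewer picojoules per bit, so the two metrics move in opposite directions across the 12-15W range.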

The optical transceiver market reached $13.57 billion in 2025 and is projected to reach $25.74 billion by 2030, expanding at a 13.66% CAGR. By protocol, Ethernet accounted for 46% of the optical transceiver market size in 2024, whereas InfiniBand is projected to expand at a 17.45% CAGR. By data rate, the 100–400 Gbps band held a 38% share in 2024, yet the >400 Gbps category is advancing at a 16.31% CAGR to 2030.
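The growth rate can be cross-checked against the two market-size figures. This is a minimal sketch of the standard compound annual growth rate formula, assuming a five-year span from 2025 to 2030.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding `rate` annually for `years` years."""
    return start_value * (1.0 + rate) ** years


if __name__ == "__main__":
    # Market sizes in billions of USD, as quoted in the text.
    rate = cagr(13.57, 25.74, 5)
    print(f"Implied CAGR: {rate * 100:.2f}%")
    print(f"2030 projection at 13.66%: ${project(13.57, 0.1366, 5):.2f}B")
```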

Silicon Photonics Manufacturing Defines Production Scalability

The manufacturing methodology for 400G optical transceivers represents a departure from traditional optical component assembly. Silicon photonics integrates multiple optical functions (modulators, wavelength multiplexers, photodetectors) onto a single chip fabricated using CMOS-compatible processes. This integration enables manufacturing scalability that discrete optics cannot achieve.

The fabrication flow comprises several stages. Waveguide structures are etched onto silicon-on-insulator (SOI) wafers, creating the optical routing infrastructure. Mach-Zehnder modulators (MZM) are then formed through doping and metallization steps. The critical challenge involves fiber-to-chip coupling: expanding highly confined silicon waveguide modes (effective diameter ~0.5μm) to match standard single-mode fiber modes (~9μm). For 400G-FR4 silicon photonics transceivers, developers adopted low-loss edge couplers rather than vertical grating couplers, which tolerate fabrication variations and temperature changes poorly, particularly across the O-band spectrum (1260-1360nm).
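To see why the mode-size mismatch matters, the sketch below estimates the loss of butting a ~0.5μm mode directly against a ~9μm fiber mode with no spot-size converter. It assumes both modes are perfectly aligned circular Gaussians, which is only a rough approximation for a silicon waveguide, so treat the result as an order-of-magnitude illustration rather than a design figure.

```python
import math


def mode_overlap_efficiency(mfd_a_um: float, mfd_b_um: float) -> float:
    """Power coupling between two aligned circular Gaussian modes.

    Uses the textbook overlap result (2*wa*wb / (wa^2 + wb^2))^2,
    where wa and wb are the mode field radii.
    """
    wa = mfd_a_um / 2.0
    wb = mfd_b_um / 2.0
    return (2.0 * wa * wb / (wa ** 2 + wb ** 2)) ** 2


def coupling_loss_db(efficiency: float) -> float:
    """Convert a power coupling efficiency (0..1] to loss in dB."""
    return -10.0 * math.log10(efficiency)


if __name__ == "__main__":
    # Mode diameters quoted in the text: ~0.5 um waveguide, ~9 um fiber.
    eta = mode_overlap_efficiency(0.5, 9.0)
    print(f"Coupling efficiency without mode conversion: {eta * 100:.2f}%")
    print(f"Equivalent loss: {coupling_loss_db(eta):.1f} dB")
```

Under these assumptions only about 1% of the light couples across, which is why edge couplers that gradually expand the mode are a central part of the design.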

The assembly process leverages automated passive alignment. Laser diode arrays are flip-chip bonded to the silicon photonics chip using precision pick-and-place equipment, eliminating the manual active alignment required for discrete components. This automation reduces assembly time from hours to minutes per module while improving reproducibility. The completed photonic integrated circuit (PIC) connects to a DSP chip and electrical interface through standard electronics packaging.

Manufacturing partnerships accelerate production ramp. Hengtong Rockley's joint venture deployed 400G DR4 silicon photonics modules using Rockley's technology, employing 7nm DSP chips for signal processing. The optical chipsets integrate passive and active optical components to greatly reduce optical sub-assembly needs, while introducing special designs to ease fiber coupling. Automated passive alignment processes for light sources and fiber arrays simplify manufacturing and enable mass production. Similar collaborations between integrated circuit foundries (GlobalFoundries, TSMC) and photonics startups demonstrate the technology's maturation from research to volume manufacturing.

For traditional manufacturing sectors, the production efficiency parallels semiconductor fab operations. A silicon photonics line can process thousands of transceivers per week once optimized, compared to hundreds for discrete assembly. This throughput advantage becomes vital when hyperscale operators order modules in 10,000+ unit quantities.

Form Factor Evolution and QSFP-DD Dominance

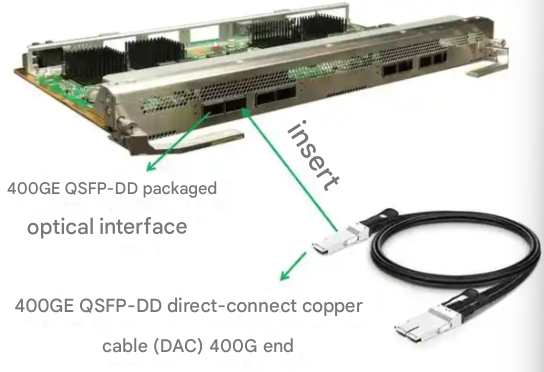

The 400G optical transceiver market centers on the QSFP-DD (Quad Small Form-factor Pluggable Double Density) form factor, which defines both physical specifications and electrical interfaces. The QSFP-DD standard employs eight electrical lanes operating at 50Gbps PAM4, aggregating to 400Gbps total bandwidth. The double-density design maintains backward compatibility with QSFP28 (100G) modules while doubling the electrical interface density.

Physical dimensions and power envelopes constrain design choices. QSFP-DD modules measure approximately 18.35mm width × 89.4mm depth, fitting into standard switch faceplates with 36 ports per 1U. The 12-15W power specification requires careful thermal management: heat sinks, airflow optimization, and efficient power conversion circuits prevent thermal throttling. Precision OT describes its QSFP-DD modules as enabling double-density QSFP interconnects through an eight-lane electrical interface. With each lane running at 50Gbps PAM4, the module delivers 400G, quadrupling the bandwidth of its 4x25Gb/s QSFP28 counterpart.

Alternative form factors serve specific niches. OSFP (Octal Small Form-factor Pluggable) modules offer higher power budgets (up to 15W) and better thermal characteristics but sacrifice port density, a tradeoff acceptable for high-performance computing clusters but less suitable for density-optimized datacenter switching. QSFP112 modules using 4 lanes at 100G PAM4 represent the next evolution, though they require newer ASICs with 100G SerDes support.

The electrical interface architecture determines host compatibility. The 400GAUI-4 electrical interface utilizes four high-speed lanes, supported by PFE ASICs such as Express-5 (BX), Tomahawk-5, and upcoming Trio-7 (XT). These ASICs use 100G SerDes for native 800G support but also support 400G by using 4x100G as the electrical interface between host and pluggable optic. The 400GAUI-8 interface, using eight 50G lanes, predominates in current deployments due to broader ASIC support.

Manufacturing standardization through the QSFP-DD Multi-Source Agreement (MSA) ensures interoperability across vendors. Cisco, Juniper, Arista, and Dell switches accept compatible modules from multiple suppliers, preventing vendor lock-in and enabling competitive pricing. This openness drives the ecosystem's growth.

Optical Specifications and Distance Categories

The 400G optical transceiver encompasses multiple variants optimized for specific transmission distances, each requiring distinct optical components and manufacturing approaches. The distance categories reflect datacenter architecture: short-reach for intra-rack and rack-to-rack connections, medium-reach for campus and datacenter interconnect (DCI), and long-reach for metropolitan area networks.

SR8 (Short Reach) modules target 100m transmission over OM4 multimode fiber. These employ VCSEL (Vertical Cavity Surface Emitting Laser) arrays at 850nm wavelength, leveraging eight parallel optical channels at 50Gbps PAM4 each. The parallel optics architecture uses MPO-16 connectors, simplifying cabling but requiring fiber management for 16-strand bundles. SR8 modules cost $200-250, making them the most economical option for short distances. Manufacturing involves standard VCSEL die attachment and minimal optical alignment, contributing to low costs and high production volumes.

DR4 (Datacenter Reach 4) and FR4 (Four-wavelength Reach) modules extend range to 500m and 2km respectively over single-mode fiber. Both carry four optical lanes of 100Gbps PAM4, but they transport them differently. DR4 sends each lane at a nominal 1310nm over its own fiber pair (parallel single-mode fiber, typically through an MPO-12 connector), whereas FR4 places the lanes on four wavelengths (1271nm, 1291nm, 1311nm, 1331nm) and requires a CWDM (Coarse Wavelength Division Multiplexing) multiplexer to combine them onto a duplex fiber pair. In scenarios with rates above 400G, traditional DML and EML lasers incur high costs, while silicon photonics transceivers integrate multi-channel lasers, modulators, and detectors onto silicon photonics chips, greatly reducing volume and providing clear cost advantages. Silicon photonics manufacturing proves particularly advantageous here, as MZM modulators and wavelength multiplexers can be fabricated on the same chip.

LR4 and ER8 variants serve longer reaches: 10km and 40km. These require more sophisticated optical components, including external cavity lasers for stability, enhanced FEC (Forward Error Correction) algorithms, and higher-power optical amplifiers. The manufacturing complexity increases costs to $600-800 for LR4 and $3,500+ for ER8. Long-reach modules find applications primarily in DCI scenarios connecting geographically dispersed datacenters.

Coherent 400G ZR/ZR+ represents a distinct category. The 400G ZR optical transceiver utilizes coherent optical technology to transmit data at 400 Gbps over distances up to 120 kilometers on Dense Wavelength Division Multiplexing (DWDM) links, while ZR+ variants extend transmission to several hundred kilometers. The standardized pluggable design supports interoperability between vendors, facilitating easier adoption and lowering expenses. These modules integrate DSP chips performing complex signal processing, enabling transmission over existing DWDM infrastructure without intermediate regeneration.

Production Processes and Supply Chain Integration

Manufacturing 400G optical transceivers involves orchestrating multiple specialized components: silicon photonics chips, DSP ASICs, laser diodes, optical connectors, and mechanical housings. The supply chain complexity requires vertical integration strategies or carefully managed supplier relationships.

The typical production flow follows this sequence. Silicon photonics wafers are fabricated at CMOS foundries (GlobalFoundries, Tower Semiconductor, or captive Intel facilities), then undergo die singulation and testing. Separately, III-V laser wafers (typically InP-based for 1310nm wavelength) are fabricated at specialized compound semiconductor facilities. The PIC and laser dies are combined through flip-chip bonding, forming the optical engine. This hybrid integration represents the most delicate manufacturing step, requiring <5μm alignment tolerances.

PCB assembly integrates electrical components. The DSP ASIC, which handles PAM4 encoding/decoding, clock-data recovery, and FEC processing, mounts alongside voltage regulators and passive components. High-speed electrical routing on the PCB demands careful impedance matching and crosstalk minimization, challenges that scale with data rates. The optical engine then attaches to the PCB, with fiber pigtails or receptacles completing the optical interface.

Quality control occurs at multiple stages. Wafer-level testing screens silicon photonics chips for optical loss, crosstalk, and wavelength accuracy before assembly. The completed transceiver undergoes electrical eye diagram testing, optical power measurement, and thermal cycling to verify performance across operating conditions (0°C to 70°C for commercial grade, -40°C to 85°C for extended temperature variants). FEC is enabled by default on optical transceivers. The FEC algorithm encodes data before transmission, then decodes it and corrects errors upon reception. For 400G optical transceivers, the industry-standard FEC code is RS(544, 514), also known as KP4 FEC or FEC119.
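The RS(544, 514) notation encodes the key properties of the code. As a brief sketch using standard Reed-Solomon relationships (the helper names are illustrative), each codeword carries 514 data symbols plus 30 parity symbols and can correct up to half as many symbol errors as it has parity symbols.

```python
RS_N = 544  # total symbols per codeword
RS_K = 514  # data symbols per codeword
SYMBOL_BITS = 10  # RS(544, 514) operates on 10-bit symbols


def parity_symbols(n: int, k: int) -> int:
    """Number of redundant symbols added to each codeword."""
    return n - k


def correctable_symbols(n: int, k: int) -> int:
    """Maximum symbol errors a Reed-Solomon code can correct per codeword."""
    return (n - k) // 2


def code_rate(n: int, k: int) -> float:
    """Fraction of transmitted symbols that carry data."""
    return k / n


if __name__ == "__main__":
    print(f"Parity symbols:      {parity_symbols(RS_N, RS_K)}")
    print(f"Correctable symbols: {correctable_symbols(RS_N, RS_K)}")
    print(f"Code rate:           {code_rate(RS_N, RS_K):.4f}")
    print(f"Codeword size:       {RS_N * SYMBOL_BITS} bits")
```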

Regional manufacturing distribution reflects strategic considerations. Chinese manufacturers (Innolight, Eoptolink, Hisense) dominate volume production, leveraging cost advantages and proximity to hyperscale datacenter construction. Innolight continues to lead 400G datacom shipments in overall volume. Several of the largest suppliers reported substantial growth in 3Q24 as 400GbE shipments more than tripled year-over-year, though 800GbE module growth slowed following massive expansion in the prior quarter. North American and European manufacturers (Cisco, Juniper, Coherent) focus on high-value coherent modules and specialized variants, where intellectual property and technical complexity create competitive moats.

For AI datacenter applications, the supply chain faces unique pressures. GPU clusters require massive optical bandwidth for inter-GPU communication, and NVIDIA sources its 800G modules from Fabrinet. Those modules represent the third-largest source of modules at the highest speed in production, supporting unprecedented demand from AI infrastructure deployment. This specialized demand strains manufacturing capacity, lengthening lead times and incentivizing capacity expansion across the supply base.

Performance Testing and Quality Validation Protocols

Ensuring reliable operation across millions of deployed transceivers requires comprehensive testing protocols that validate optical, electrical, and environmental performance. Manufacturers implement multi-stage qualification processes aligned with industry standards (IEEE 802.3bs for 400GbE, MSA specifications for form factors).

Optical characterization verifies transmitter and receiver parameters. Transmit optical power must fall within specified ranges (typically -2 to +2 dBm for DR4) to ensure sufficient signal strength at the receiver without causing nonlinear fiber effects. Optical extinction ratio, measuring the contrast between '1' and '0' bits, must exceed 3.5 dB for PAM4 signals. Receiver sensitivity testing determines the minimum optical power at which the transceiver achieves target bit error rates (typically 2.4×10^-4 pre-FEC for KP4 FEC).
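A pass/fail check against these transmitter limits might look like the sketch below. The -2 to +2 dBm window and 3.5 dB extinction-ratio floor are the figures quoted above; the function names and the idea of measuring the highest and lowest optical levels in milliwatts are illustrative assumptions.

```python
import math


def mw_to_dbm(power_mw: float) -> float:
    """Convert optical power from milliwatts to dBm."""
    return 10.0 * math.log10(power_mw)


def extinction_ratio_db(p_high_mw: float, p_low_mw: float) -> float:
    """Ratio of the highest to the lowest optical level, in dB."""
    return 10.0 * math.log10(p_high_mw / p_low_mw)


def check_transmitter(avg_power_mw, p_high_mw, p_low_mw,
                      power_window_dbm=(-2.0, 2.0), min_er_db=3.5):
    """Return a dict of pass/fail results for the two transmitter limits."""
    avg_dbm = mw_to_dbm(avg_power_mw)
    er_db = extinction_ratio_db(p_high_mw, p_low_mw)
    low, high = power_window_dbm
    return {
        "avg_power_dbm": avg_dbm,
        "extinction_ratio_db": er_db,
        "power_ok": low <= avg_dbm <= high,
        "er_ok": er_db > min_er_db,
    }


if __name__ == "__main__":
    # Hypothetical measurement: 1.25 mW average, levels at 2.0 and 0.5 mW.
    print(check_transmitter(avg_power_mw=1.25, p_high_mw=2.0, p_low_mw=0.5))
```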

Electrical interface testing validates high-speed signal integrity. The eight 50Gbps PAM4 electrical lanes connect to host ASIC SerDes, requiring eye diagram measurements to verify signal amplitude, jitter, and noise characteristics. Clock data recovery (CDR) circuits must lock to incoming data streams within microseconds, with jitter tolerance specified in the QSFP-DD MSA. Return loss and insertion loss measurements ensure impedance matching across the electrical path.

Environmental stress testing exposes reliability issues. Temperature cycling between -40°C and 85°C (or 0°C and 70°C for commercial grade) verifies that optical alignment remains stable despite thermal expansion. Humidity exposure and mechanical shock tests simulate real-world installation and operation. Aging tests run modules at elevated temperatures (85°C) for 1,000+ hours to accelerate failure mechanisms and predict long-term reliability. Target failure rates specify <500 FIT (Failures In Time, counted per billion device-hours).
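A FIT target is easier to interpret once it is translated into expected failures for a fleet. The sketch below assumes a constant failure rate and a hypothetical fleet of 10,000 modules running continuously for one year.

```python
HOURS_PER_YEAR = 8760


def expected_failures(fit: float, modules: int, hours: float) -> float:
    """Expected failure count, given FIT = failures per 1e9 device-hours."""
    return fit * modules * hours / 1e9


def mtbf_hours(fit: float) -> float:
    """Mean time between failures implied by a constant FIT rate."""
    return 1e9 / fit


if __name__ == "__main__":
    # 500 FIT is the target ceiling quoted in the text; the fleet size is
    # an illustrative assumption.
    failures = expected_failures(500, 10_000, HOURS_PER_YEAR)
    print(f"Expected failures per year: {failures:.1f}")
    print(f"Implied MTBF: {mtbf_hours(500):,.0f} hours")
```

At the 500 FIT ceiling, a fleet of that size would see a few dozen failures per year, which is why operators at hyperscale push for rates well below the target.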

Digital diagnostics monitoring (DDM) provides real-time operational visibility. QSFP-DD modules feature RoHS compliance, digital diagnostic monitoring, support for both single-mode and multi-mode fiber transmission mediums, QSFP-DD MSA compliance, PAM4 electrical and optical channels, and support for Tx/Rx speeds up to 400Gbps. The DDM interface reports temperature, supply voltage, transmit/receive optical power, and laser bias current, enabling proactive maintenance and rapid fault isolation.
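The monitoring logic built on top of DDM readings is typically a set of range checks. The sketch below is a minimal illustration: the `DdmReading` structure and every threshold in `HYPOTHETICAL_LIMITS` are assumptions made for this example, not values from the QSFP-DD MSA or any vendor datasheet, and real modules report their own alarm and warning thresholds.

```python
from dataclasses import dataclass, asdict


@dataclass
class DdmReading:
    """One snapshot of the parameters a DDM interface reports."""
    temperature_c: float
    supply_voltage_v: float
    tx_power_dbm: float
    rx_power_dbm: float
    laser_bias_ma: float


# Illustrative (low, high) limits only; replace with the module's own values.
HYPOTHETICAL_LIMITS = {
    "temperature_c": (0.0, 70.0),
    "supply_voltage_v": (3.135, 3.465),
    "tx_power_dbm": (-2.0, 2.0),
    "rx_power_dbm": (-6.0, 2.0),
    "laser_bias_ma": (10.0, 90.0),
}


def out_of_range(reading: DdmReading, limits=HYPOTHETICAL_LIMITS) -> list:
    """Return the names of all parameters outside their limits."""
    alarms = []
    for name, value in asdict(reading).items():
        low, high = limits[name]
        if not (low <= value <= high):
            alarms.append(name)
    return alarms


if __name__ == "__main__":
    hot_module = DdmReading(78.0, 3.30, -3.5, -3.0, 40.0)
    print(out_of_range(hot_module))
```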

Interoperability testing validates operation across different vendors' equipment. Multi-vendor laboratory facilities test combinations of switches, transceivers, and cables to ensure compatibility. This testing proves particularly important given the open MSA ecosystem, where datacenter operators often mix equipment from multiple suppliers.

Deployment Patterns in Modern Hyperscale Facilities

The architectural decisions for deploying 400G optical transceivers reflect datacenter network topologies, distance requirements, and cost optimization strategies. Modern hyperscale facilities employ leaf-spine architectures, where top-of-rack (ToR) switches connect servers and leaf switches aggregate ToR traffic to spine switches.

ToR to leaf connections predominantly use 400G DR4 modules. The typical distance spans 100-300m within a datacenter building, falling comfortably within DR4's 500m specification over single-mode fiber. DR4 carries its four 100G lanes over eight parallel single-mode fibers in an MPO-12 connector, half the fiber count of SR8's 16-fiber MPO bundles. A 10,000-server datacenter might deploy 300+ ToR switches, each with 8-16 uplinks, consuming 2,400-4,800 transceivers and representing $1-3 million in optics costs alone.
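The transceiver count and optics budget above come from simple multiplication. The sketch below reproduces it using the switch count and uplink range from this paragraph and the $400-700 DR4 price range quoted earlier in the article; like the text, it counts one module per uplink.

```python
def transceiver_count(switches: int, uplinks_per_switch: int) -> int:
    """Modules needed when each uplink port takes one transceiver."""
    return switches * uplinks_per_switch


def optics_cost_range(switches, uplinks_range, unit_price_range):
    """Return (low, high) total optics cost in dollars."""
    low = transceiver_count(switches, uplinks_range[0]) * unit_price_range[0]
    high = transceiver_count(switches, uplinks_range[1]) * unit_price_range[1]
    return low, high


if __name__ == "__main__":
    low, high = optics_cost_range(300, (8, 16), (400, 700))
    print(f"Transceivers: {transceiver_count(300, 8)}-{transceiver_count(300, 16)}")
    print(f"Optics cost:  ${low:,} to ${high:,}")
```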

Leaf to spine connections often upgrade to 800G to reduce oversubscription ratios and port counts. However, where 800G modules remain cost-prohibitive, leaf switches employ 16-24 ports of 400G FR4 modules for 2km reach to centralized spine switches. The wavelength multiplexing reduces fiber count, a significant factor when datacenter operators manage tens of thousands of fiber strands.

Datacenter interconnect (DCI) scenarios demand longer reaches. Metropolitan DCI links connecting facilities 10-80km apart deploy 400G ZR or ZR+ coherent modules. Fiber carriers such as Zayo are laying new metro rings that feed short-reach (<10 km) leaf-spine fabrics with 400ZR optics, while DWDM transport spend is set to top USD 3 billion by 2029. These coherent transceivers integrate onto existing DWDM infrastructure, avoiding dedicated dark fiber costs. The tunable wavelength capability (50 GHz or 75 GHz channel spacing) enables flexible capacity planning.

An Asian AI-focused datacenter deployment illustrates the operational model. The operator integrated 400G OSFP modules in GPU clusters. Power-per-bit savings eliminated the need for additional cooling infrastructure, reducing both CAPEX and OPEX over a 3-year period. The GPU-to-GPU interconnects demanded sustained 400Gbps throughput with sub-microsecond latency, achievable only with direct optical links replacing traditional electrical switching.

Migration strategies from 100G to 400G follow phased approaches. Initial deployments target new switch installations, avoiding disruptive forklift upgrades of existing infrastructure. As servers refresh with 100G or 200G NICs, aggregation switches upgrade to 400G uplinks. The backward compatibility of QSFP-DD ports with QSFP28 modules enables gradual transitions, with mixed-speed deployments during migration periods.

400G Transceiver Applications Beyond Datacenter Switching

While hyperscale datacenters drive the majority of 400G transceiver demand, other network segments increasingly adopt these modules for distinct application requirements.

Access network evolution creates demand for high-speed passive optical network equipment. The progression from GPON to XGS-PON and now toward 50G-PON reflects residential bandwidth growth driven by streaming video, remote work, and smart home devices. Network operators planning next-generation access architectures evaluate 100G and 400G aggregate capacity at optical line terminals. 400G transceivers designed for access applications differ from datacenter variants in several respects. Wavelength plans must align with PON standards rather than datacenter CWDM grids. Power budgets accommodate longer reach through split passive networks. Perhaps most significantly, outdoor OLT deployments face temperature extremes that exceed typical datacenter conditions, demanding extended temperature operation and more rigorous thermal cycling qualification.

Metro and regional datacenter interconnect represents another growth segment. Operators connecting facilities across metropolitan areas require 400G ZR and ZR+ coherent modules capable of 80km to 120km reach without intermediate amplification. These deployments leverage existing DWDM infrastructure, with tunable wavelengths enabling flexible capacity planning as bandwidth requirements grow. The coherent DSP complexity increases power consumption to 15W to 20W, creating thermal management challenges that differ from short-reach datacenter modules.

Enterprise campus networks present different deployment considerations. Building-to-building connections spanning 500m to 2km favor 400G FR4 modules, while backbone links within large campus switching fabrics may use DR4 variants. Enterprise IT teams typically lack the specialized optical expertise common in hyperscale operations, placing premium value on plug-and-play reliability and vendor support. Module qualification for enterprise channels often emphasizes extended warranty terms and comprehensive DDM visibility over raw cost optimization.

Storage area networks transitioning to NVMe over Fabrics benefit from 400G bandwidth for flash array connectivity. Low latency requirements for storage protocols place tight constraints on fiber path design, favoring short-reach SR8 or DR4 modules. The storage use case demands exceptional reliability given the criticality of data access, leading some storage vendors to specify enhanced thermal cycling and burn-in requirements beyond standard datacenter procurement.

Thermal Cycling and Environmental Stress Screening

Thermal cycling represents one of the most demanding qualification tests for 400G optical transceivers. The process subjects modules to repeated temperature transitions between extreme cold and heat, exposing latent defects that would otherwise manifest as field failures months or years after deployment.

Test protocols follow established telecommunications standards. Telcordia GR-468-CORE defines the baseline requirements adopted by most datacenter operators. Under this framework, transceivers undergo a minimum of 500 temperature cycles between the rated operating extremes. For commercial-grade modules rated 0°C to 70°C, chambers transition between these boundaries at controlled rates. Extended temperature variants rated from -40°C to 85°C face more severe stress, with some hyperscale customers specifying 1000 cycles for critical infrastructure deployments.

The thermal profile parameters influence test effectiveness. Ramp rates typically range from 10°C to 15°C per minute, fast enough to induce thermal stress but controlled to prevent shock damage unrelated to actual operating conditions. Dwell times at temperature extremes allow components to reach thermal equilibrium, typically 15 to 30 minutes depending on module thermal mass. A complete 500-cycle test therefore requires approximately 350 to 500 hours of chamber time per batch.

Several failure mechanisms emerge during thermal cycling. The most common involves coefficient of thermal expansion (CTE) mismatch between materials. Silicon photonics chips, printed circuit boards, ceramic substrates, and metallic heat sinks all expand at different rates when heated. Repeated cycling creates cumulative mechanical stress at interfaces. Solder joints connecting laser diodes to substrates prove particularly vulnerable. Microscopic cracks form and propagate with each cycle until electrical or thermal resistance increases beyond acceptable limits.

Fiber alignment stability presents unique challenges for 400G silicon photonics modules. Edge-coupled designs rely on sub-micron positioning between fiber arrays and waveguide facets. Thermal expansion can shift this alignment, increasing insertion loss. Well-designed modules incorporate compliant mounting structures that accommodate dimensional changes while maintaining optical coupling. Thermal cycling validates these designs under realistic stress conditions.

PAM4 signaling used in 400G transmission exhibits greater temperature sensitivity compared to earlier NRZ modulation. The four amplitude levels require tighter signal-to-noise ratios, and thermal drift in driver circuits or modulator bias points can compress eye openings. DSP algorithms compensate for temperature-dependent variations, but compensation has limits. Thermal cycling with concurrent optical measurements verifies that modules maintain acceptable bit error rates across the full temperature range.

Temperature grades serve different deployment scenarios. Commercial-grade transceivers (0°C to 70°C) target climate-controlled datacenter environments where HVAC systems maintain stable conditions. These modules represent the bulk of hyperscale deployments. Extended temperature modules (-40°C to 85°C) address edge computing, outdoor cabinets, and telecom central offices where ambient conditions vary more widely. The more demanding temperature range requires additional design margins in optical and electrical circuits, typically adding 15% to 25% to module cost.

Burn-in testing complements thermal cycling by operating modules at elevated temperature (typically 85°C) under electrical bias for extended periods, commonly 168 to 1000 hours. This accelerated life test reveals infant mortality failures related to semiconductor defects or contamination. Combined with thermal cycling, burn-in provides comprehensive screening that reduces field failure rates to target levels below 500 FIT.

Frequently Asked Questions

What makes 400G optical transceivers suitable for datacenter applications?

400G optical transceivers deliver 4x the bandwidth of 100G modules while consuming only 2-2.5x the power, providing superior energy efficiency critical for hyperscale operations. Silicon photonics manufacturing enables cost points of $400-700 for DR4 modules, making them economically viable for mass deployment. The QSFP-DD form factor maintains high port density (36 ports per 1U switch faceplate) while backward compatibility with QSFP28 simplifies migration from existing 100G infrastructure.

How does silicon photonics manufacturing differ from traditional optical component production?

Silicon photonics integrates multiple optical functions (modulators, multiplexers, photodetectors) onto a single chip using CMOS-compatible semiconductor processes. This contrasts with traditional approaches that assemble discrete optical components requiring manual alignment and hermetic sealing. The integration reduces assembly costs, improves reliability through fewer components and connections, and enables wafer-scale testing that identifies defects before packaging. Manufacturing throughput increases from hundreds to thousands of units weekly.

What distance options exist for 400G datacenter transceivers?

SR8 modules cover 100m over multimode fiber for intra-rack connections, DR4 extends to 500m over single-mode fiber for within-datacenter links, FR4 reaches 2km for campus interconnects, LR4 spans 10km for datacenter-to-datacenter connections, and coherent ZR/ZR+ variants achieve 80-120km for metropolitan area DCI. The appropriate variant depends on datacenter architecture, with most hyperscale facilities standardizing on DR4 for the majority of connections.

How do 400G transceivers support AI and machine learning workloads?

AI training clusters require sustained high-bandwidth, low-latency communication between GPUs for gradient synchronization during distributed training. 400G optical transceivers provide the necessary bandwidth (400Gbps per port) with sub-microsecond latency, eliminating network bottlenecks in GPU-to-GPU communication. The energy efficiency (30-37.5 pJ per bit) proves essential because AI clusters already consume megawatts of power, and inefficient networking would worsen thermal and power challenges.

What quality validation processes ensure transceiver reliability?

Manufacturers implement multi-stage testing including wafer-level screening of silicon photonics chips, optical power and extinction ratio measurements, electrical eye diagram validation, temperature cycling between -40°C and 85°C, mechanical shock testing, and 1,000+ hour aging at elevated temperatures. Target failure rates specify <500 FIT (Failures In Time, counted per billion device-hours). Digital diagnostics monitoring provides real-time visibility into temperature, optical power, and laser bias current, enabling proactive maintenance.

How does PAM4 modulation enable 400G transmission?

PAM4 (4-level Pulse Amplitude Modulation) encodes 2 bits per symbol using four distinct signal amplitude levels, compared to NRZ modulation's single bit per symbol using two levels. This doubles the data rate without requiring a proportional increase in baud rate or bandwidth. For 400G transceivers, eight electrical lanes run at roughly 26.6 Gbaud PAM4 (50Gbps per lane), aggregating to 400Gbps. The tradeoff involves reduced signal-to-noise ratio, requiring forward error correction and digital signal processing to maintain acceptable bit error rates.
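The relationship between bit rate, symbol rate, and lane count can be sketched for the eight-lane electrical interface. The overhead factor below combines RS(544, 514) FEC with 256b/257b transcoding, as used in 400G Ethernet; the function names are illustrative.

```python
import math

# Line-rate expansion from 256b/257b transcoding plus RS(544, 514) FEC.
FEC_OVERHEAD = (257 / 256) * (544 / 514)


def bits_per_symbol(levels: int) -> float:
    """Bits carried by one symbol with the given number of amplitude levels."""
    return math.log2(levels)


def baud_rate_gbd(bit_rate_gbps: float, levels: int) -> float:
    """Symbol rate needed to carry a bit rate at a given modulation order."""
    return bit_rate_gbps / bits_per_symbol(levels)


if __name__ == "__main__":
    lanes = 8
    payload_per_lane = 400.0 / lanes              # 50 Gbps of Ethernet payload
    line_rate = payload_per_lane * FEC_OVERHEAD   # rate on the wire, with FEC

    print(f"NRZ bits/symbol:  {bits_per_symbol(2):.0f}")
    print(f"PAM4 bits/symbol: {bits_per_symbol(4):.0f}")
    print(f"Lane line rate:   {line_rate:.3f} Gbps")
    print(f"PAM4 symbol rate: {baud_rate_gbd(line_rate, 4):.4f} GBd")
    print(f"NRZ would need:   {baud_rate_gbd(line_rate, 2):.3f} GBd")
```

The same lane would need twice the symbol rate with NRZ, which is the bandwidth saving PAM4 buys at the cost of tighter signal-to-noise margins.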

What is thermal cycling testing and why does it matter for 400G transceivers?

Thermal cycling testing repeatedly exposes transceivers to temperature extremes, typically transitioning between -40°C and 85°C for extended temperature modules or 0°C to 70°C for commercial grades. The process subjects components to mechanical stress from thermal expansion and contraction, revealing latent defects in solder joints, fiber alignment mechanisms, and semiconductor die attach. A standard qualification requires 500 to 1000 cycles, with each cycle lasting approximately 45 minutes. Modules passing thermal cycling demonstrate resilience against the temperature variations encountered during shipping, installation, and long-term operation in datacenter environments where localized hot spots can create significant thermal gradients.

What temperature range do 400G datacenter transceivers support?

Commercial-grade 400G transceivers operate from 0°C to 70°C case temperature, suitable for climate-controlled datacenter environments. Extended temperature variants support -40°C to 85°C for telecom, outdoor, and edge computing deployments where ambient conditions vary more widely. Industrial applications sometimes require operation across the full extended range with enhanced reliability guarantees. The operating temperature grade affects component selection, design margins, and qualification testing rigor, with extended temperature modules typically costing 15% to 25% more than commercial equivalents.

How do 400G transceivers for access networks differ from datacenter modules?

Access network transceivers, including those supporting next-generation PON architectures, differ from datacenter modules in wavelength planning, power budget requirements, and environmental specifications. PON applications use wavelength assignments defined by ITU-T standards rather than datacenter CWDM grids. Longer reach through passive split networks demands higher optical power budgets. Outdoor OLT deployments require extended temperature operation and more stringent thermal cycling qualification to handle exposure to weather extremes. These factors combine to create distinct product designs optimized for access network deployment conditions.

Are 800G transceivers harder to qualify than 400G modules?

Yes, 800G transceivers present more demanding qualification challenges. Power consumption increases from 12-15W for 400G to 18-23W for 800G within the same QSFP-DD form factor, creating higher thermal density. Component junction temperatures approach limits more quickly during thermal cycling. Some 800G modules carry reduced maximum ambient temperature ratings compared to 400G equivalents. The industry is developing linear pluggable optics (LPO) architectures that move DSP functions to the host system, reducing module power to 8-10W and easing thermal constraints at the cost of increased system design complexity.

Key Takeaways

Silicon photonics manufacturing reduces 400G optical transceiver production costs through CMOS-compatible processes and automated assembly, with DR4 modules now priced at $400-700 compared to $1,000+ just three years ago

QSFP-DD form factor dominates 400G deployments, offering 36 ports per 1U with eight 50Gbps PAM4 electrical lanes while maintaining backward compatibility with 100G QSFP28 infrastructure

Distance variants serve specific datacenter architecture needs: SR8 for 100m intra-rack, DR4 for 500m within facilities, FR4 for 2km campus links, and coherent ZR for 80-120km metropolitan DCI connections

Manufacturing quality protocols validate optical power specifications, electrical signal integrity, environmental stress resistance, and long-term reliability with target failure rates below 500 FIT

Hyperscale datacenter deployments prioritize power efficiency (30-37.5 pJ per bit, or roughly 27-33 Gbps per watt) over upfront costs, with AI GPU clusters demonstrating how 400G optics eliminate infrastructure expansion needs through superior energy performance

References

Cignal AI - Over 20 Million 400G & 800G Datacom Optical Module Shipments Expected for 2024 - https://cignal.ai/2025/01/over-20-million-400g-800g-datacom-optical-module-shipments-expected-for-2024/

Link-PP - 400G Optical Transceivers: Power Efficiency Driving Hyperscale Data Center Adoption in 2025 - https://www.link-pp.com/blog/400g-hyperscale-efficiency-2025.html

Mordor Intelligence - Optical Transceiver Market Size, Competitive Growth & Forecast - https://www.mordorintelligence.com/industry-reports/optical-transceiver-market

ResearchGate - 400G Silicon Photonics Integrated Circuit Transceiver Chipsets - https://www.researchgate.net/publication/339766855

FiberMall - Silicon Photonics (SiPh) optical transceiver: Q&A - https://www.fibermall.com/blog/silicon-photonics-optical-transceiver.htm