Optical Capacity Planning: How to Future-Proof Your Fiber Network

Apr 30, 2026

The datacom optical component market grew over 60% in 2025, crossing $16 billion in revenue (LightCounting via Introl). That number matters for one reason: every organization competing for 400G and 800G modules is drawing from the same supply pool. Teams that plan optical network capacity proactively secure allocation, pricing leverage, and installation windows. Teams that react, upgrading only after spine links hit saturation, end up paying expedited rates for modules that arrive after the GPUs they were supposed to connect.

The unplanned re-cabling is usually the bigger hit. We see it regularly: an organization orders 400G QSFP-DD transceivers, installs them, and discovers that half the existing cross-connect paths can't sustain PAM4 signaling at the required bit error rate. The fiber was fine at 100G. It isn't fine anymore. That fiber replacement, not the transceivers, becomes the dominant cost line on the upgrade project.

Fiber Plant Readiness Assessment: Start Here, Not at the Transceiver Catalog

The first step in any data center fiber plant readiness assessment is measuring what you actually have, not what the installation spec said you'd have.

PAM4 encodes two bits per symbol instead of one, which doubles throughput per lane but dramatically tightens noise margins. Fiber plants that performed fine at 100G routinely fail at 400G speeds because the cumulative insertion loss from connectors, splices, and bends eats into the reduced signal margin that PAM4 demands.

Here's what that looks like in practice. A 400G SR4 link budget per IEEE 802.3db allows roughly 1.5 dB of total connector insertion loss. A single contaminated connector typically adds 0.3–0.5 dB. Three dirty connectors in a cross-connect path, which is not unusual in a production environment with regular patching activity, add 0.9–1.5 dB and can consume the entire connector loss budget before you account for the fiber attenuation itself. At 100G NRZ, that same path would have passed with 1–2 dB of margin to spare. We've measured this repeatedly across Cisco, Arista, Juniper, and Dell switch platforms in our test lab: contamination that causes zero observable effect at 10G produces intermittent CRC errors at 400G PAM4 lane rates. Those errors are difficult to diagnose in production because they don't trigger hard link-down events.
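The connector arithmetic above can be sketched in a few lines. This is a minimal illustration, not a link-budget tool: it uses only the 1.5 dB connector allocation and the 0.3–0.5 dB contamination penalty quoted above, and the function name `contamination_headroom_db` is hypothetical.

```python
CONNECTOR_BUDGET_DB = 1.5      # connector loss allocation quoted above for 400G SR4
DIRTY_PENALTY_DB = (0.3, 0.5)  # added loss per contaminated connector (low, high)


def contamination_headroom_db(dirty_connectors: int,
                              budget_db: float = CONNECTOR_BUDGET_DB) -> tuple[float, float]:
    """Return (best_case, worst_case) connector budget left after contamination alone.

    Clean-connector loss and fiber attenuation are deliberately ignored, so the
    real headroom is smaller than what this returns.
    """
    low, high = DIRTY_PENALTY_DB
    best = round(budget_db - dirty_connectors * low, 2)
    worst = round(budget_db - dirty_connectors * high, 2)
    return best, worst


for n in range(1, 4):
    best, worst = contamination_headroom_db(n)
    print(f"{n} dirty connector(s): {worst:.1f} to {best:.1f} dB of connector budget left")
```

With three contaminated connectors the worst case leaves no connector budget at all, which matches the scenario described above.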

For multimode environments, the distance constraints tighten considerably at each speed generation. A 10GBASE-SR module reaches 300 meters over OM3; at 400G SR8, you're looking at 70 meters on the same fiber per IEEE 802.3cm. If your leaf-to-spine runs exceed that, the 400G QSFP-DD upgrade path requires either single-mode migration or architectural changes to shorten physical distances, both of which take months to execute and should be planned well ahead of transceiver procurement.
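A quick way to see which runs are affected is to compare measured run lengths against the reach figures above. The sketch below assumes you already have a run-length inventory; the run names, lengths, and the helper `runs_exceeding_reach` are hypothetical, and only the two OM3 reach values quoted in this section are included.

```python
# Reach figures quoted above, in meters over OM3. Other fiber grades and
# optics are intentionally left out rather than guessed.
OM3_REACH_M = {
    "10GBASE-SR": 300,
    "400GBASE-SR8": 70,
}


def runs_exceeding_reach(run_lengths_m: dict[str, float], optic: str) -> list[str]:
    """Return the names of runs longer than the OM3 reach of the given optic."""
    limit = OM3_REACH_M[optic]
    return sorted(name for name, length in run_lengths_m.items() if length > limit)


# Hypothetical leaf-to-spine run lengths, for illustration only.
runs = {"leaf01-spine01": 45.0, "leaf02-spine01": 68.0, "leaf07-spine02": 92.0}
print(runs_exceeding_reach(runs, "400GBASE-SR8"))
```

Any run this returns is a candidate for single-mode migration or a shorter physical path before transceivers are ordered.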

Choosing the Right Speed Tier: The Decision That Defines Your TCO

Optical network capacity planning for data centers comes down to a three-variable problem that doesn't appear on any vendor datasheet: supply chain maturity, your workload trajectory, and how much of your total upgrade cost sits outside the module price.

400G delivers four times the bandwidth of 100G at roughly 2.5 to 3 times the module cost, a meaningful improvement in cost per gigabit. But in the 400G-to-800G migrations we've supported, module cost has consistently been the smaller line item. Switch chassis, power and cooling infrastructure, cabling plant remediation, and operations team training collectively outweigh it. Planning on module price alone is how organizations end up with transceivers that technically work but a network that operationally doesn't.
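The cost-per-gigabit claim is simple arithmetic, sketched here using only the 4× bandwidth and 2.5–3× module cost multiples stated above. The function name is illustrative, and the result covers module cost only, not the larger line items listed in the paragraph.

```python
def relative_cost_per_gbit(bandwidth_multiple: float, cost_multiple: float) -> float:
    """Cost per gigabit of the new tier relative to the old tier (1.0 = no change)."""
    return cost_multiple / bandwidth_multiple


for cost_multiple in (2.5, 3.0):
    ratio = relative_cost_per_gbit(4.0, cost_multiple)
    print(f"{cost_multiple}x module cost -> {ratio:.3f}x cost per gigabit "
          f"({(1 - ratio) * 100:.1f}% lower)")
```

On those multiples, 400G module cost per gigabit comes out 25% to 37.5% below 100G.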

QSFP-DD ports are backward compatible with QSFP28 modules, which means you can install 400G-capable switches and continue running existing 100G modules during a staged migration. That backward compatibility lets you spread capital expenditure across multiple budget cycles while immediately gaining the platform benefits of newer switch silicon, a detail that matters when you need to justify the upgrade to a CFO who wants to see ROI inside 18 months.

800G transceivers double bandwidth again via 8×100G PAM4 lanes (IEEE 802.3df) in OSFP or QSFP-DD800 form factors, with modules drawing 14–20W depending on reach variant. The supply chain dynamics differ materially from 400G: fewer qualified suppliers, less competitive pricing pressure, and longer lead times. Industry deployment data consistently shows 90+ day allocation cycles for 800G modules in volume (Vitex).

If you're building or expanding AI training infrastructure where GPU idle time from network bottlenecks costs thousands per hour, deploy 800G on spine links now. The module premium pays for itself within months through reduced GPU idle cost, and the 2×FR4 breakout to existing 400G leaf infrastructure protects your migration path.

If you're refreshing a campus core or WAN edge that will carry traditional enterprise workloads for the next 3–5 years with no AI-adjacent traffic in the planning horizon, 400G's mature ecosystem delivers better five-year TCO. The competitive supplier base currently prices 400G meaningfully below early-lifecycle 800G on a per-gigabit basis.

If your workload mix is uncertain, and that's most mid-market data centers, default to 800G-capable switch platforms but populate with 400G transceivers initially. You get the platform headroom without the module premium, and you upgrade ports individually as traffic demands it.
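The three scenarios above reduce to a small lookup. The sketch below is one way to encode them for a planning script; the `Workload` categories and the `recommend_tier` helper are hypothetical names, and the enterprise platform choice is inferred from the text's recommendation of the 400G ecosystem for that case.

```python
from enum import Enum


class Workload(Enum):
    AI_TRAINING = "ai_training"  # GPU idle time is the dominant cost
    ENTERPRISE = "enterprise"    # campus core / WAN edge, no AI-adjacent traffic
    UNCERTAIN = "uncertain"      # mixed or unknown trajectory


def recommend_tier(workload: Workload) -> dict[str, str]:
    """Map a workload category to the platform/optics pairing described above."""
    if workload is Workload.AI_TRAINING:
        return {"switch_platform": "800G", "optics": "800G on spine links"}
    if workload is Workload.ENTERPRISE:
        return {"switch_platform": "400G", "optics": "400G"}
    # Default for uncertain workloads: platform headroom without the module premium.
    return {"switch_platform": "800G-capable", "optics": "400G initially"}


print(recommend_tier(Workload.UNCERTAIN))
```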

1.6T transceivers are entering early production targeting hyperscale and NVIDIA-specific applications, with OSFP-XD gaining standardization backing from the Open Compute Project (OCP). Volume pricing won't materialize before 2027. Design your fiber plant and switch chassis to accommodate 1.6T, but don't let it delay an 800G deployment your traffic demands today.

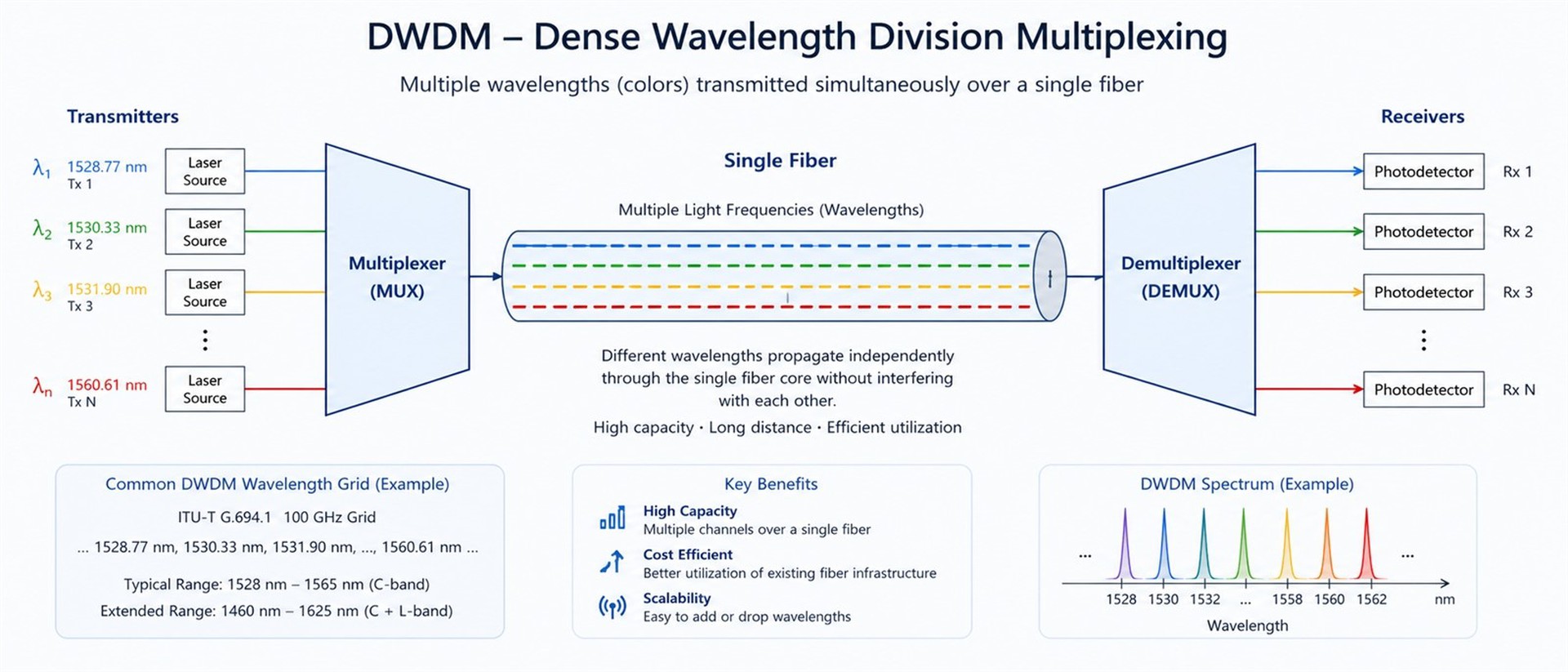

DWDM as a Capacity Multiplier

One dimension that nearly every competing guide on this topic skips: you don't always need faster transceivers to get more bandwidth from existing fiber.

For metro DCI links under 80 km where you have dark fiber access, DWDM capacity expansion beats laying new cable on cost in nearly every scenario we've deployed. A properly designed C-band DWDM system supports 80+ independent channels on a single fiber pair. Extending into the L-band doubles that. Research into multi-band transparent optical networks has confirmed this approach is often cheaper than lighting additional dark fibers while delivering comparable capacity growth (ScienceDirect).

We applied the same wavelength-multiplexing principle, using CWDM rather than DWDM, for a financial services client connecting a primary data center to 12 branch offices across a metro area. The original infrastructure was 10G point-to-point on leased dark fiber. They were running out of wavelengths, not fiber capacity. The solution: FB-LINK CWDM-10G modules on an 18-channel passive mux/demux at each endpoint, providing dedicated 10 Gbps wavelengths to all 12 locations plus 6 spare channels for future expansion, without touching a single strand of the physical plant. Total deployment time was under three weeks per site, versus the 4–6 month timeline their construction contractor quoted for additional fiber pulls.

The real barrier to DWDM deployment isn't the technology; it's the operational skill set. If your team is Ethernet-only, budget 3–6 months for skills transfer. The exact training path depends on whether you're deploying passive CWDM, amplified DWDM, or extending into L-band, and each option has different implications for your fiber loss profile and amplification requirements.

LPO, CPO, and What They Mean for Your Planning Timeline

Two emerging technologies will reshape optical capacity planning methodology over the next three years, and your infrastructure decisions today need to account for both, even though neither changes what you should deploy right now.

Linear-drive Pluggable Optics (LPO) eliminates the power-hungry DSP inside the transceiver module, connecting linear TIAs and drivers directly to the switch ASIC. The result: 30–50% lower power consumption and latency reduction below 15 nanoseconds versus conventional retimed modules (LightCounting via Introl). For dense GPU clusters where every watt of optical power is a watt not available to compute, LPO changes the capacity-per-rack equation meaningfully. Standardization is advancing through OIF, with initial deployments in hyperscale scale-up networks expected in 2026–2027.

Co-packaged optics embeds the photonic engine directly onto the switch ASIC package, cutting optical-layer power from roughly 15 pJ/bit to approximately 5 pJ/bit, a 3× efficiency gain demonstrated by Broadcom's Bailly 51.2T CPO platform. But CPO eliminates field-replaceable optics, which means a photonic-layer failure can force replacement of the entire board. That trade-off keeps CPO confined to hyperscale operators building custom silicon through at least 2027.

The practical implication for planning: design your power and cooling infrastructure to handle 15–20W per 800G module today. When LPO matures, you'll reclaim 30–50% of that power budget without changing the physical infrastructure. That recovered power headroom is your free capacity expansion path.
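To put the power figures in watts, the sketch below converts the pJ/bit numbers into switch-level optical power and estimates what the 30–50% LPO saving would reclaim. It is a rough illustration only: the 64-port switch is a hypothetical example, and applying the pJ/bit figures to a full 51.2 Tb/s of throughput is a simplifying assumption.

```python
def optical_power_w(energy_pj_per_bit: float, throughput_tbps: float) -> float:
    """Power in watts = energy per bit x bits per second (pJ/bit x Tb/s = W)."""
    return energy_pj_per_bit * throughput_tbps


def lpo_reclaimed_w(ports: int, module_w: float, saving_fraction: float) -> float:
    """Watts reclaimed if every module's draw drops by saving_fraction."""
    return ports * module_w * saving_fraction


# CPO figures from the text, applied to 51.2 Tb/s of switch throughput.
pluggable_w = optical_power_w(15, 51.2)
cpo_w = optical_power_w(5, 51.2)
print(f"Optical layer: {pluggable_w:.0f} W pluggable vs {cpo_w:.0f} W co-packaged")

# Hypothetical 64-port spine switch, using the 15-20 W and 30-50% ranges above.
low = lpo_reclaimed_w(64, 15, 0.30)
high = lpo_reclaimed_w(64, 20, 0.50)
print(f"LPO reclaimed per switch: {low:.0f} to {high:.0f} W")
```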

Phased Deployment: The 400G-to-800G Migration Sequence

Start the spine upgrade when any spine port sustains utilization above 70% during peak traffic windows. Don't wait for 80%: by then you're already experiencing microbursts that cause buffer overflow, and the procurement lead time for 800G allocation will extend your congestion window by 90+ days.

Spine-first is standard practice for Clos fabrics. Upgrading spine to 800G while keeping leaf at 400G works cleanly via breakout: a single 800G 2×FR4 port connects to two 400G FR4 ports, doubling spine bandwidth without touching the leaf layer. The pluggable module architecture that makes this possible is also the reason you can execute the upgrade with zero downtime: pull one spine link at a time, rebalance ECMP, upgrade, verify DDM readings, move to the next.
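The 70% trigger can be checked automatically against whatever utilization telemetry you already collect. The sketch below is one possible interpretation: "sustained" is assumed to mean three consecutive peak-window samples above the threshold, and the port names and sample values are hypothetical.

```python
TRIGGER = 0.70  # sustained peak utilization threshold from the text


def sustained_above(samples: list[float], threshold: float = TRIGGER,
                    min_consecutive: int = 3) -> bool:
    """True if at least min_consecutive back-to-back samples exceed threshold."""
    run = 0
    for value in samples:
        run = run + 1 if value > threshold else 0
        if run >= min_consecutive:
            return True
    return False


# Hypothetical peak-window utilization samples per spine port (fraction of line rate).
ports = {
    "spine1/1": [0.55, 0.72, 0.74, 0.71, 0.60],
    "spine1/2": [0.65, 0.78, 0.62, 0.75, 0.68],
}
needs_upgrade = [name for name, s in ports.items() if sustained_above(s)]
print(needs_upgrade)
```

Requiring consecutive samples keeps a single burst from triggering a procurement cycle; adjust `min_consecutive` to match your polling interval.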

Critical Procurement Detail

Order optical modules at least 90 days before your GPU or server delivery date. Industry deployment data consistently shows that transceiver procurement, not technology, is where 800G migration plans fail in execution. GPUs arrive, optical infrastructure doesn't, and idle compute costs accumulate. If you're planning a 500+ port deployment, secure allocation 120 days out and confirm vendor lead times monthly. Supply chain volatility at 800G speeds remains higher than at 400G.
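Working backward from the compute delivery date gives a hard order-by date. This sketch applies the 90-day and 120-day rules above; the delivery date and port counts are hypothetical, and `order_by` is an illustrative helper name.

```python
from datetime import date, timedelta


def order_by(delivery: date, ports: int) -> date:
    """Latest order date: 90 days before delivery, 120 days for 500+ ports."""
    lead_days = 120 if ports >= 500 else 90
    return delivery - timedelta(days=lead_days)


# Hypothetical GPU delivery date and port counts.
gpu_delivery = date(2026, 10, 1)
print(order_by(gpu_delivery, ports=128))
print(order_by(gpu_delivery, ports=640))
```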

What Goes Wrong: Lessons from Production Deployments

AWS published a detailed account of how their 100G-to-400G transition initially increased interconnect failure rates across tens of millions of optical links, a counterintuitive result for a technology upgrade. The root cause wasn't the transceivers themselves but the combinatorial explosion of multi-vendor interoperability: multiple switch ASICs × multiple DSP vendors × multiple module suppliers created a testing matrix that no single qualification cycle could fully cover (AWS).

Most enterprises can't replicate AWS's vendor leverage. But the lesson scales down: test your specific switch-to-transceiver combinations in your own lab environment before production deployment, using Pre-FEC BER and VDM telemetry as acceptance criteria, not just link-up/link-down. We've caught a specific class of failures through this process: modules that pass basic qualification but show marginal Rx sensitivity under thermal stress, pushing Pre-FEC BER above 1e-4 only at sustained production load. That pattern appears most often with certain DSP-to-switch ASIC combinations. Our pre-validated compatibility data for Cisco, Arista, Juniper, and Dell platforms is available on request.
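An acceptance check along these lines might look like the sketch below. It assumes you have already collected soak-test readings from your platform's telemetry; the `SoakSample` structure and the sample values are hypothetical, and the 1e-4 limit is the figure mentioned above rather than a universal threshold.

```python
from dataclasses import dataclass

PRE_FEC_BER_LIMIT = 1e-4  # threshold mentioned in the text; tune per platform


@dataclass
class SoakSample:
    link_up: bool
    pre_fec_ber: float
    module_temp_c: float  # recorded for context; not used as a pass/fail gate here


def accept_module(samples: list[SoakSample], ber_limit: float = PRE_FEC_BER_LIMIT) -> bool:
    """Pass only if the link stayed up and pre-FEC BER never exceeded the limit."""
    return bool(samples) and all(
        s.link_up and s.pre_fec_ber <= ber_limit for s in samples
    )


# Hypothetical soak-test readings: link stays up, but BER degrades as the module heats.
soak = [
    SoakSample(True, 2e-6, 45.0),
    SoakSample(True, 8e-5, 62.0),
    SoakSample(True, 3e-4, 71.0),
]
print(accept_module(soak))
```

The module in this example never drops link, so a link-up/link-down test alone would have passed it.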

Building future-proof fiber infrastructure also means getting the overprovisioning margin right. Corning recommends 25–100% fiber overprovisioning based on demand uncertainty (Corning). That range is too wide to be actionable without context, so here's how we segment it:

Scenario A

If your 3-year capex plan is approved and your facilities footprint is fixed, 25–30% excess fiber is sufficient. You know where the racks will be; you're provisioning for density increases, not topology changes.

Scenario B

If you're in a growth phase with open-ended compute expansion but a defined campus, 50% is a reasonable floor. Reserve the upper end, 75–100%, for greenfield conduit runs where pulling additional cable later would mean breaking concrete. Stranded fiber is a real cost, but it's almost always cheaper than future construction.
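Turning those percentages into strand counts is straightforward. The sketch below encodes the segmentation above; the scenario keys and the 144-strand requirement are hypothetical, and the 75% upper bound for the growth scenario is an assumption, taken as the point where the greenfield range begins.

```python
import math

# Overprovisioning ranges from the segmentation above, as (low, high) fractions.
MARGINS = {
    "fixed_footprint": (0.25, 0.30),        # Scenario A
    "growth_defined_campus": (0.50, 0.75),  # Scenario B: 50% floor; 0.75 cap is assumed
    "greenfield_conduit": (0.75, 1.00),     # pathways that are costly to reopen
}


def strands_to_pull(required_strands: int, scenario: str) -> tuple[int, int]:
    """Return (minimum, maximum) strand counts including overprovisioning."""
    low, high = MARGINS[scenario]
    return (math.ceil(required_strands * (1 + low)),
            math.ceil(required_strands * (1 + high)))


# Hypothetical requirement of 144 strands on a trunk.
for scenario in MARGINS:
    print(scenario, strands_to_pull(144, scenario))
```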

Building Your Optical Capacity Plan

Five decisions, in sequence. Each one gates the next.

1. Baseline your current fiber plant.

Measure insertion loss and return loss on every path you plan to upgrade, not from the installation records, but with current OTDR and power meter readings. If any cross-connect path exceeds the connector loss budget for your target speed tier (1.5 dB for 400G SR4, tighter for 800G), remediate before ordering transceivers. Our test lab can run link budget verification against your specific switch platform if you need a second set of eyes.

2. Forecast bandwidth demand by network tier.

Spine, leaf, and DCI links grow at different rates. AI training clusters may double spine utilization in 12 months; enterprise campus cores rarely grow faster than 15–20% annually. Match the forecast to the tier, not a single blanket number.

3. Select the speed tier per network layer.

Use the three-scenario framework above. Then cross-reference current-generation transceiver options, from 100G through 800G, against your fiber plant baseline from step 1. The module you want is only useful if your cabling can carry it.

4. Sequence the deployment spine-first.

Trigger at 70% sustained spine utilization. Use breakout optics to bridge the gap between upgraded spine and existing leaf. Plan zero-downtime cutovers by upgrading one link at a time with ECMP rebalancing.

5. Align procurement to compute delivery.

90-day minimum lead time for 800G allocation. Confirm monthly. If your deployment exceeds 500 ports, extend to 120 days and diversify suppliers. Single-source risk at 800G volume is real.
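Steps 2 and 4 connect through a simple compound-growth calculation: given a tier's growth rate, how long until it reaches the 70% trigger? The sketch below assumes steady compound growth, which real traffic rarely follows exactly, and the 35% starting utilization is a hypothetical value.

```python
import math


def months_to_threshold(current_util: float, annual_growth: float,
                        threshold: float = 0.70) -> float:
    """Months until utilization reaches threshold under compound annual growth."""
    if current_util >= threshold:
        return 0.0
    return 12 * math.log(threshold / current_util) / math.log(1 + annual_growth)


# Hypothetical starting point: 35% peak utilization on both tiers.
ai_spine = months_to_threshold(0.35, 1.00)     # utilization doubling in 12 months
campus_core = months_to_threshold(0.35, 0.15)  # 15% annual growth
print(f"AI spine: {ai_spine:.1f} months, campus core: {campus_core:.1f} months")
```

Subtract the procurement lead time from step 5 from each result to see how much planning runway each tier actually has.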

If you're working through steps 1–3 and need help matching fiber plant conditions to transceiver specifications, that's a conversation worth starting before the procurement cycle locks in. Our 400G standard models ship from stock; custom-coded variants take 7–10 business days.

FAQ

Q: What is optical capacity planning?

A: It's the process of forecasting fiber network bandwidth requirements and aligning transceiver technology, cabling infrastructure, and deployment timelines to meet demand without overinvesting or creating bottlenecks.

Q: How do I assess if my fiber plant supports 400G or 800G?

A: Run a link budget assessment covering every connector, splice, and bend. PAM4 signaling has tighter noise margins than NRZ, so fiber plants that worked at 100G often fail at higher speeds.

Q: Should I deploy 800G now or wait for 1.6T?

A: Deploy based on current traffic demand, not future product availability. Design infrastructure to accommodate 1.6T, but don't delay an 800G deployment your workload requires today.

Q: What's the most common optical upgrade mistake?

A: Focusing on transceiver speed while ignoring fiber plant readiness. Unplanned re-cabling during migration typically costs more than the modules themselves.

Q: Where does DWDM fit in capacity planning?

A: DWDM multiplies capacity on existing fiber by adding wavelengths, a cost-effective alternative to laying new cable, especially for metro DCI links under 80 km with dark fiber access.