High Speed Optical Interconnect: Data Center Solutions

Apr 27, 2026

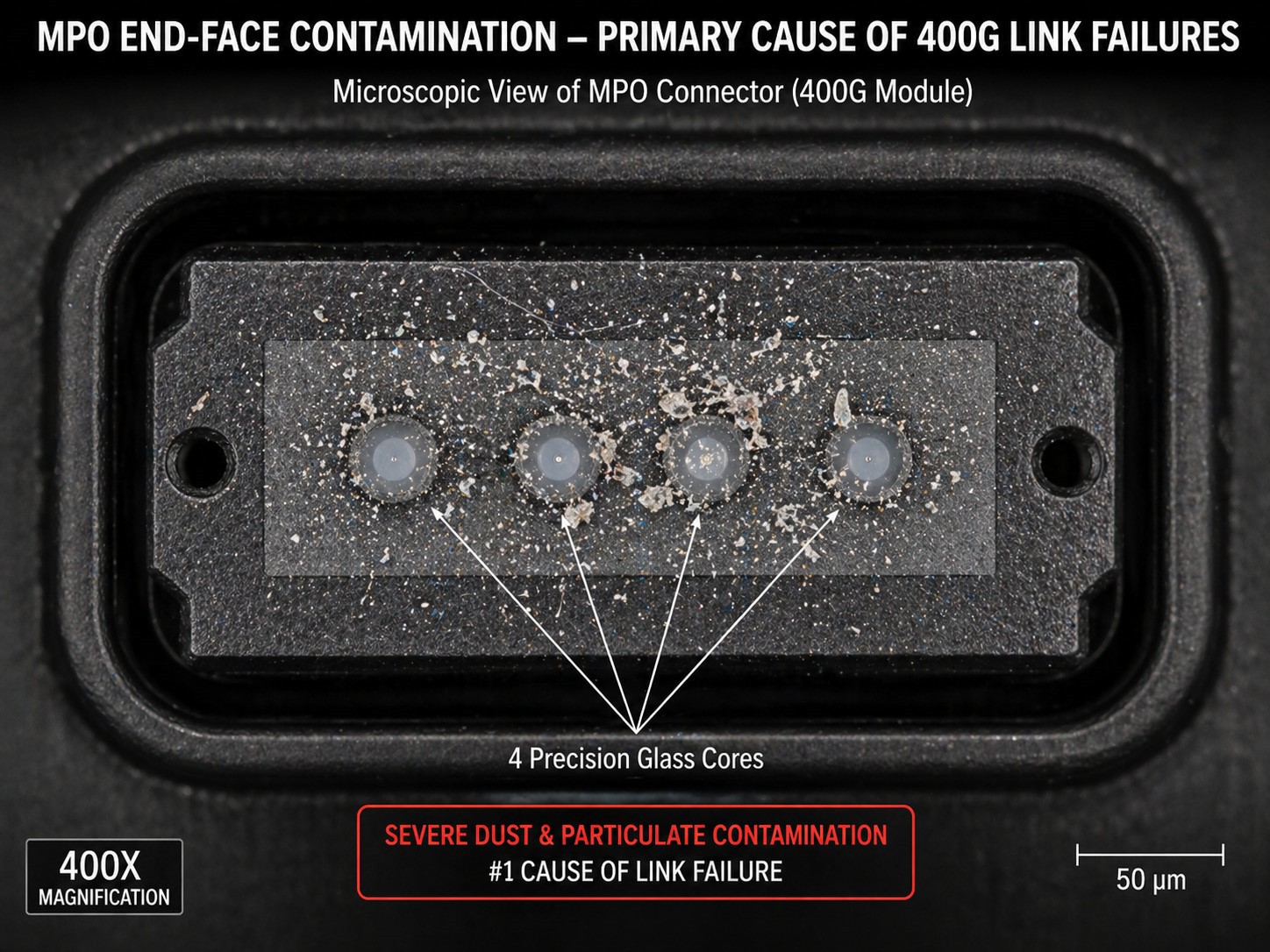

Last quarter we had a customer ship back forty 400G DR4 modules, claiming random link flaps on their Arista 7060X5 switches. Before we even opened the RMA paperwork, our test engineer asked one question: did you inspect the MPO connectors before installation? They had not. We sent the modules through our full regression: eye diagrams clean, BER below 1E-13 across all four lanes, DDM readings nominal. Then we asked them to send photos of their trunk cable end-faces under a fiber microscope. Every single connector had particulate contamination. Forty modules, zero defects. The problem was dust.

We see some version of this every month. High speed optical interconnect deployments at any scale hit the same wall, and the numbers back it up: somewhere between 65% and 70% of all 400G and 800G link failures trace back to connector contamination, not to transceiver faults. (IEEE 802.3 field data via AscentOptics) We bring this up first because it frames how we think about the entire interconnect decision. The module is almost never the weakest link. The physical layer around it is.

Your Traffic Pattern Decides Your Optical Interconnect Architecture

Everyone starts with the part number. QSFP-DD or OSFP, SR or DR, multimode or single-mode. We do it too. But the deployments that went well for our customers all started somewhere else: what does the traffic actually look like?

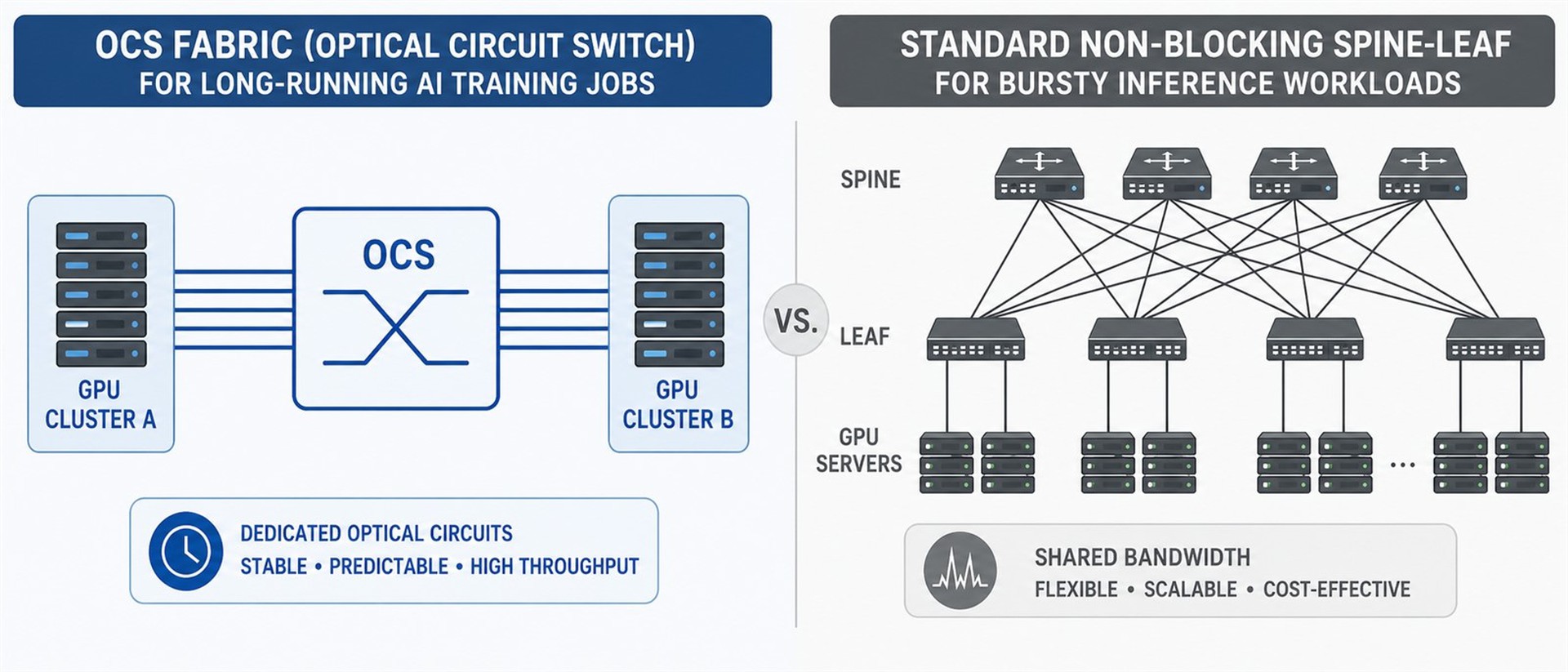

Large-scale AI training generates all-to-all GPU communication that turns out to be surprisingly predictable over timescales of minutes to hours. Google exploits this with optical circuit switches in their Jupiter network, reconfiguring physical light paths between racks instead of switching packets. Their published results from a decade of production use: 41% power reduction, 30% lower capex, and 50x improvement in fabric uptime versus their prior Clos architecture. (Google SIGCOMM'22) Those numbers are real, but they belong to a company that spent somewhere between $500 million and $1 billion on OCS infrastructure over five years. We've had a handful of mid-size customers ask us to evaluate OCS feasibility for their environments. In every case, once they ran the numbers at sub-500 node scale, the capital requirements outweighed the reconfiguration benefits, and they stayed with conventional spine-leaf using pluggable modules.

Inference flips the equation. Traffic is bursty and unpredictable at the flow level, and latency tolerance is close to zero. You can't reconfigure optical paths on a per-request basis. What you need is a consistently overprovisioned fabric with deterministic latency, which pushes you toward pluggable transceivers in a spine-leaf topology where every link is always lit. We sell modules into both scenarios, and the engineering conversations are completely different. Training cluster buyers want to know about aggregate bandwidth per rack and power per bit. Inference buyers ask about tail latency and what happens when a link goes down.

For traditional enterprise and colo environments below hyperscale, cost per port dominates and backward compatibility with existing fiber plant matters more than raw bandwidth density. We've tested our 400G QSFP-DD modules across 14 switch platforms including Cisco Nexus 93600CD, Arista 7060X5, and Juniper QFX5220. In those environments, the dominant concern isn't speed. It's whether the module gets recognized by the switch firmware without manual override commands.

The 800G Dead Zone That Catches Engineers Off Guard

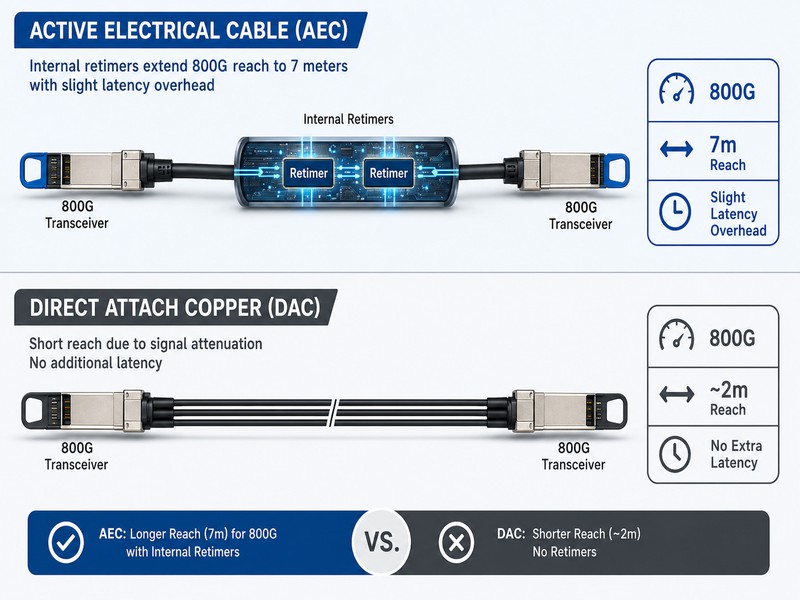

At 400G, picking an interconnect was a two-step process: measure the distance, choose copper or fiber. Passive DAC covered 3 to 5 meters comfortably. 800G broke that. Each lane runs 112G PAM4. Copper loss at those frequencies roughly doubles versus 400G, and the result is a hard ceiling around 2 meters for passive cable.

We learned this the expensive way. An early customer ordered our 800G passive DAC assemblies in 3-meter lengths based on their 400G rack layout. Link training failed on over 60% of the ports. The copper wasn't defective; the physics just wouldn't allow it. They switched to AEC for the 3 to 5 meter runs and pluggable optical modules for everything beyond, and the deployment stabilized within a week. Since then, we've stopped taking orders for passive 800G DAC above 2.5 meters and added a deployment distance advisory to our order confirmation process.

AEC now owns the 3 to 7 meter gap. Digital retimers regenerate the signal electrically with no optical conversion, which keeps cost down but adds latency. KP4 FEC alone puts 50 to 100 nanoseconds on every hop at 800G, and the retimer stacks more on top. We measured total added latency at 85 to 110 ns on the AEC assemblies we currently ship. For spine-leaf fabric links, that overhead is invisible in application performance. For tightly coupled GPU clusters it's a different story. Based on profiling data from three customer deployments running H100 nodes, if your training job's communication overhead already sits above 15%, that extra hundred nanoseconds per hop across multiple switch tiers starts compounding in NCCL AllReduce operations.
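The distance tiers in this section can be written down as a small lookup. The following is a minimal sketch, not a sizing tool: the function and constant names are hypothetical, the thresholds are the rule-of-thumb figures from this article, and assigning everything between 2 and 7 meters to AEC is an assumption (the text gives roughly 2 meters as the passive ceiling and 3 to 7 meters as the AEC range).

```python
from enum import Enum


class Medium(Enum):
    PASSIVE_DAC = "passive DAC"
    AEC = "AEC"
    OPTICAL = "pluggable optical"


# Thresholds taken from the figures in this article; treat them as
# rules of thumb, not vendor specifications.
PASSIVE_DAC_MAX_M = 2.0  # approximate passive copper ceiling at 800G
AEC_MAX_M = 7.0          # upper end of the AEC range

# Added latency range measured per AEC assembly, in nanoseconds.
AEC_LATENCY_NS = (85, 110)


def pick_800g_medium(distance_m: float) -> Medium:
    """Suggest an interconnect type for an 800G link of the given length."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    if distance_m <= PASSIVE_DAC_MAX_M:
        return Medium.PASSIVE_DAC
    if distance_m <= AEC_MAX_M:
        return Medium.AEC
    return Medium.OPTICAL


def aec_added_latency_ns(hops: int) -> tuple[int, int]:
    """Low and high estimates of added latency across `hops` AEC links."""
    low, high = AEC_LATENCY_NS
    return hops * low, hops * high


if __name__ == "__main__":
    for d in (1.5, 3.0, 5.0, 10.0):
        print(f"{d:>5.1f} m -> {pick_800g_medium(d).value}")
    print("4 AEC hops add", aec_added_latency_ns(4), "ns")
```

Multiplying the per-assembly range by the hop count is a simplification. It ignores switch and serialization latency, so it only indicates how the AEC contribution grows with the number of tiers.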

Beyond 7 meters, pluggable optical transceivers for 800G are the only viable path. The physical layer demands tighten considerably here. End-to-end insertion loss budgets under IEEE 802.3ck land below 1.5 dB for most 800G reach classes, and every mated MPO connection must stay under 0.35 dB. We've seen installed fiber that passed certification at 100G show PMD values two to three times above its rated specification after several years of compression in cable trays, consistent with what Juniper's network research team reported in 2023. Our standard recommendation before any 800G transceiver deployment: run OTDR and PMD characterization on every existing fiber segment. Not a sample. Every segment. The cost of re-pulling two trunk cables is a fraction of the cost of debugging intermittent link flaps for six months.
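A loss budget check against the two limits quoted above might look like the sketch below. The function name, the input format, and the sample loss values are hypothetical. Real values would come from your own certification or OTDR measurements, and the limits should be confirmed against the reach class you actually deploy.

```python
MAX_LINK_LOSS_DB = 1.5   # end-to-end budget quoted in this article
MAX_MPO_LOSS_DB = 0.35   # per mated MPO connection quoted in this article


def check_link(mpo_losses_db, fiber_loss_db):
    """Return (total_loss_db, problems) for one fiber link.

    mpo_losses_db: measured loss of each mated MPO connection, in dB.
    fiber_loss_db: measured loss of the fiber itself, in dB.
    """
    problems = []
    for i, loss in enumerate(mpo_losses_db, start=1):
        if loss >= MAX_MPO_LOSS_DB:
            problems.append(
                f"MPO connection {i}: {loss:.2f} dB is not under {MAX_MPO_LOSS_DB} dB"
            )
    total = sum(mpo_losses_db) + fiber_loss_db
    if total >= MAX_LINK_LOSS_DB:
        problems.append(
            f"link total {total:.2f} dB is not under {MAX_LINK_LOSS_DB} dB"
        )
    return total, problems


if __name__ == "__main__":
    # Hypothetical measurements: four mated connections plus fiber loss.
    total, problems = check_link([0.30, 0.40, 0.30, 0.30], 0.30)
    print(f"total loss: {total:.2f} dB")
    for p in problems:
        print("FAIL:", p)
```

A link can fail on a single dirty connector even when the total is within budget, which is why the sketch reports per-connection and end-to-end problems separately.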

CPO vs LPO vs Pluggable: Where Each Technology Actually Stands in 2026

Co-packaged optics will change how data center switching infrastructure is built. We say this as a pluggable module manufacturer, so take our view as informed rather than neutral.

At OFC 2026, reliability data on CPO prototypes showed failure rates potentially lower than traditional pluggable modules. Without mechanical insertion cycles or exposed connector surfaces, the dominant failure modes of pluggable modules simply don't apply. Broadcom's Bailly 51.2T CPO switch platform demonstrated roughly 70% lower power draw on the optical layer compared to equivalent pluggable configurations. (DataMIntelligence Optical Interconnect Report) NVIDIA showed CPO-integrated switches at GTC 2026 targeting scale-up deployment in the 2027 to 2028 window.

Our position: if you are not a hyperscaler building custom switch silicon, pluggable optics are your only deployable option through at least 2027. CPO needs board architectures most switch vendors haven't shipped yet, connector standards that aren't finalized, and a whole new playbook for handling failures you can't fix by pulling a module. The ecosystem for general enterprise procurement doesn't exist yet. We've had two prospective customers in the past year delay their 400G-to-800G upgrades waiting for CPO. Both eventually came back and placed pluggable orders after their bandwidth gaps became production incidents. We wrote about the engineering rationale for this position in more detail in our pluggable transceiver architecture analysis.

LPO sits in a different space. Removing the DSP from the module cuts out the single most power-hungry component, responsible for up to half of total module power draw. The result is 30 to 50% lower consumption and up to 15 nanoseconds less latency. We started fielding LPO-specific RFQs in late 2025. Of the four we have received, three came from customers building single-vendor GPU clusters on NVIDIA Spectrum-X. None operated multi-vendor fabrics, which tells you everything about where LPO works today. If your network mixes switch vendors, LPO isn't compatible with your environment. If you run a single-vendor AI cluster, it might be the smartest upgrade available, and we expect to have LPO-ready modules in qualification by mid-2027.

What 800G Thermal Margins Mean for Module Selection

The thermal math at 800G catches people off guard. High speed optical interconnect power density at this generation creates problems that simply didn't exist at 400G. A 64-port switch fully loaded with 800G modules at 16 W each draws roughly 1 kW in transceiver power alone, before the switch ASIC's own 400 to 500 W. That's 1.4 to 1.5 kW per switch. Eight switches in a spine layer put you over 11 kW from network equipment alone, in racks that were often provisioned for 8 to 10 kW total.
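The arithmetic above is easy to rerun for your own port counts and module power classes. This is a minimal sketch with hypothetical function names. It counts only transceiver and ASIC power and ignores fans, power supply losses, and everything else in the chassis.

```python
def switch_power_w(ports: int, module_w: float, asic_w: float) -> float:
    """Transceiver power plus ASIC power for one fully loaded switch, in watts."""
    return ports * module_w + asic_w


def spine_power_kw(switches: int, ports: int, module_w: float, asic_w: float) -> float:
    """Combined power of identical switches, in kilowatts."""
    return switches * switch_power_w(ports, module_w, asic_w) / 1000.0


if __name__ == "__main__":
    # Figures from the paragraph above: 64 ports, 16 W modules,
    # ASIC at 400 to 500 W, eight spine switches.
    for asic_w in (400, 500):
        per_switch = switch_power_w(64, 16, asic_w)
        total = spine_power_kw(8, 64, 16, asic_w)
        print(f"ASIC {asic_w} W: {per_switch} W per switch, {total:.1f} kW for 8 switches")
```

With the article's figures this gives 1,424 to 1,524 W per switch and roughly 11.4 to 12.2 kW across eight switches.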

Juniper Networks explicitly warns that third-party modules with high power draw, particularly coherent ZR and ZR+ types, can cause thermal damage to host equipment, with liability falling on the user. (Juniper Networks 800G Optics FAQ) That's not an argument against third-party optical modules. It's an argument for knowing exactly what you're plugging in. In our thermal cycling qualification, we test every 800G module design at sustained 85°C junction temperature for 2,000 hours and track laser bias current drift as the primary aging indicator. Units that drift above 38 mA get pulled from the production line. At 800G densities, the difference between a module drawing 14 W and one drawing 18 W determines whether a rack stays within its thermal envelope or triggers shutdown alarms at 2 AM. Getting the spec wrong has always been annoying. At these power levels, it's expensive.
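A screening rule like the bias current limit above could be expressed as follows. This is an illustrative sketch only, not our production test code. The data layout and names are hypothetical, and it assumes the 38 mA figure is applied to the peak bias current reading logged during the soak test.

```python
BIAS_LIMIT_MA = 38.0  # pull threshold quoted in the text


def screen_units(bias_logs_ma):
    """Split units into (passed, pulled) by peak laser bias current.

    bias_logs_ma maps a serial number to a list of bias-current
    readings in mA taken over the soak test.
    """
    passed, pulled = [], []
    for serial, readings in bias_logs_ma.items():
        if not readings:
            raise ValueError(f"no readings for {serial}")
        if max(readings) > BIAS_LIMIT_MA:
            pulled.append(serial)
        else:
            passed.append(serial)
    return passed, pulled


if __name__ == "__main__":
    # Hypothetical serial numbers and readings for illustration.
    logs = {
        "SN-A": [30.1, 31.0, 32.4],
        "SN-B": [33.0, 36.5, 38.2],
    }
    passed, pulled = screen_units(logs)
    print("passed:", passed)
    print("pulled:", pulled)
```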

We ship pluggable modules from 1G SFP through 800G OSFP, and we test against major switch platforms. We keep records on what works and what doesn't. If you need a compatibility check against a specific switch environment, or want thermal and power-class specs for high-density 800G racks, our engineers run those conversations every week. Our 800G OSFP and QSFP-DD800 transceiver page has specs, reach-class options, and sample request forms for every module we ship.